In 2022, US chipmaker Nvidia released the H100, one of its most powerful processors ever – and one of its most expensive, costing around $40,000. The launch seemed badly timed, as businesses sought to cut spending amid rampant inflation.

Then in November ChatGPT was launched.

“We went from a very difficult year last year to a period of transformation overnight,” said Jensen Huang, chief executive officer of Nvidia. He said OpenAI’s hit chatbot was an “aha moment”. “It created an immediate demand.”

The sudden popularity of ChatGPT has triggered an arms race among the world’s leading tech companies and start-ups, which are racing to get the H100, described by Huang as “the world’s first computer [chip] designed for generative AI” – artificial intelligence systems that can quickly generate human-like text, images and content.

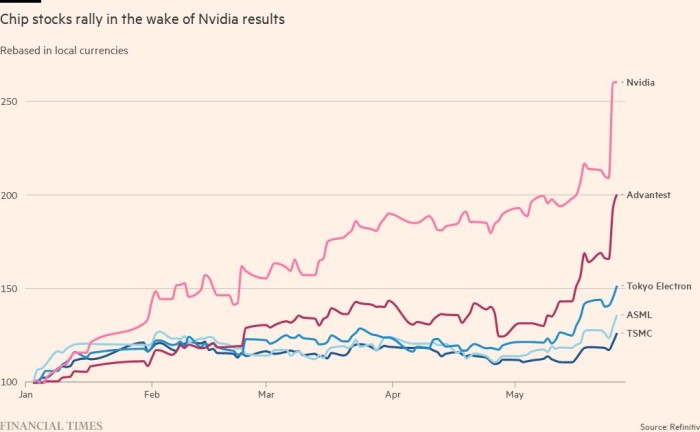

The importance of having the right product at the right time became clear this week. Nvidia announced on Wednesday that its sales for the three months ending in July will hit $11 billion, 50 percent above Wall Street’s previous estimates, fueled by a revival in data center spending by Big Tech and demand for its AI chips.

Investors’ reaction to the forecast added $184bn to Nvidia’s market capitalization in a single day on Thursday, taking what was already the world’s most valuable chip company close to a $1tn valuation.

Nvidia is an early winner from the astronomical rise of generative AI, a technology that threatens to reshape industries, create huge productivity gains and displace millions of jobs.

That technological leap is set to be accelerated by the H100, which is based on a new Nvidia chip architecture called “Hopper” (named after American programming pioneer Grace Hopper) and has suddenly become the hottest commodity in Silicon Valley.

“This whole thing started just as we were starting production on Hopper,” Huang said.

Huang’s confidence in continued gains comes from being able to work with chipmaker TSMC to ramp up H100 production to meet explosive demand from cloud providers such as Microsoft, Amazon and Google, internet groups such as Meta, and corporate customers.

“It’s one of the rarest engineering resources on the planet,” said Brannin McBee, chief strategy officer and founder of CoreWeave, an AI-focused cloud infrastructure start-up that was among the first to receive H100 shipments earlier this year.

Some customers have waited as long as six months to receive thousands of H100 chips to train their big data models. AI start-ups expressed concern that the supply of H100s would run out at the same time as demand was rising.

Elon Musk, who has bought thousands of Nvidia chips for his new AI start-up X.ai, told a Wall Street Journal event this week that GPUs (graphics processing units) are currently “much tougher [to get] than drugs”, joking that this was not really a high bar in San Francisco.

“The cost of computation has become astronomical,” Musk said. “The minimum has to be $250mn of server hardware [to build generative AI systems].”

The H100 is proving particularly popular with big tech companies like Microsoft and Amazon, which are building entire data centers focused on AI workloads, and with generative-AI start-ups like OpenAI, Anthropic, Stability AI and Inflection AI, as it promises higher performance that can accelerate product launches or reduce training costs over time.

“In terms of getting access, yes, this is what it looks like to ramp up a new architecture GPU,” said Ian Buck, head of Nvidia’s hyperscale and high-performance computing business. “It’s happening at scale,” he said, with some large customers looking for tens of thousands of GPUs.

The unusually large chip, an “accelerator” designed to operate in data centers, contains 80 billion transistors, five times more than the processors powering the latest iPhones. While it’s twice as expensive as its predecessor, the A100 released in 2020, early adopters say the H100 boasts at least three times better performance.

“The H100 solves the scalability question that has been troubling [AI] model creators,” said Emad Mostaque, co-founder and chief executive of Stability AI, one of the companies behind the Stable Diffusion image generation service. “This is important because it lets us all train larger models faster as it moves from a research to an engineering problem.”

While the timing of the H100’s launch was ideal, Nvidia’s success in AI can be traced back nearly two decades to an innovation in software rather than silicon.

Its CUDA software, created in 2006, allows GPUs to be repurposed as accelerators for other types of workloads beyond graphics. Then around 2012, Buck explained, “AI found us.”

Researchers in Canada realized that GPUs were ideally suited for building neural networks, a form of AI based on the way neurons interact in the human brain, which were then becoming a new focus for AI development. “It took about 20 years to get to where we are today,” Buck said.

Nvidia now has more software engineers than hardware engineers, enabling it to support the many different types of AI frameworks that have emerged in subsequent years and to make its chips more efficient at the statistical calculations needed to train AI models.

Hopper was the first architecture optimized for “Transformers”, the approach to AI that underlies OpenAI’s “Generative Pre-Trained Transformer” chatbot. Nvidia’s close work with AI researchers allowed it to identify Transformers’ emergence in 2017 and begin tuning its software accordingly.

“Nvidia arguably saw the future before everyone else with its pivot into making GPUs programmable,” said Nathan Benaich, general partner at Air Street Capital, an investor in AI start-ups. “It saw an opportunity and bet big and consistently outperformed its competitors.”

Benaich estimates that Nvidia has a two-year lead over its rivals, but says: “Its position is far from unassailable on both the hardware and software fronts.”

Stability AI’s Mostaque agrees. “Next-generation chips from Google, Intel and others are catching up [and] even CUDA becomes less of a moat because the software is standardized.”

For some in the AI industry, Wall Street’s enthusiasm this week looks overly optimistic. Yet “for the time being,” said Jay Goldberg, founder of chip consultancy D2D Advisory, “the AI market looks set to remain a winner-takes-all market for Nvidia.”

Additional reporting by Madhumita Murgia